Many of you, my readers, already know what System Center Operations Manager (SCOM) is. But do you really know what it is?

Note that this article assumes readers have a deep knowledge of SCOM platform mechanics. If you’re looking for a rocket boost in your SCOM knowledge, subscribe to my “SCOM in 5 minutes” series. The first lesson is at: https://maxcoreblog.com/2021/04/05/scom-platform-in-5-minutes-part-1-health-model/.

If I ask a random person without any exposure to SCOM, they can quickly google it and come back with an answer: “It’s a monitoring platform from Microsoft.” And this is indeed the right answer.

Then I can ask a person who uses SCOM in their environment and has perhaps installed a couple of additional management packs. That person can answer: “It’s an extendable monitoring platform from Microsoft.” And this is again the correct answer.

Now I go a bit further and ask a more experienced person, who has just installed one of the authoring tools (MP Author/MP Studio, VSAE, or Management Pack Creator, see links at the end of this article) and successfully created their first management pack (MP). They can answer something like: “SCOM is an extendable monitoring platform from Microsoft with an open SDK, which lets anyone extend SCOM functionality and add more monitoring features.” Which is the correct answer again.

But I’m going to go much further and declare the following: “SCOM is an object-oriented database, featuring user-defined classes, class inheritance, class relationships, and instance lifecycle control. This is topped with class instance version control and change history.” How did I come to such a conclusion? Consider:

- You can define a new class in a management pack using the management pack XML schema. This is “user-defined classes”.

- You can base your class on an existing class and inherit the parent class’s properties and relationships. This is “class inheritance”.

- You can define containment and reference relationships between your new class and any other classes. This is the “class relationships” feature.

- You can also define a hosting relationship between classes. The hosting relationship implements the “instance lifecycle control” feature: when instances are bound by a hosting relationship, it instructs the DB to delete all nested instances when the top instance is deleted. (Think of a computer and its disks as an example: disk class instances are hosted on a computer class instance. Thus, when the top-level computer class instance is deleted, all of its disk class instances are deleted too.)

- Finally, all changes to class instance properties are timestamped and source-marked, and the version history is stored in the Data Warehouse. This is the “version control and change history” feature.
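To make the inheritance and hosting ideas above concrete, here is a minimal toy model in Python. It is only an illustration of the concepts, not SCOM’s SDK or storage engine: `ClassDef`, `ToyStore`, and the `Demo.*` class names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ClassDef:
    """A user-defined class; properties are inherited from the base class."""
    name: str
    properties: tuple = ()
    base: Optional["ClassDef"] = None

    def all_properties(self) -> tuple:
        inherited = self.base.all_properties() if self.base else ()
        return inherited + self.properties


class ToyStore:
    """Tracks instances and which instance hosts each of them."""

    def __init__(self):
        self.host_of = {}  # instance name -> hosting instance name, or None

    def add(self, name, host=None):
        if host is not None and host not in self.host_of:
            raise KeyError(f"unknown host: {host}")
        self.host_of[name] = host

    def delete(self, name):
        # Hosting relationship: remove hosted instances before the host itself.
        for child in [n for n, h in self.host_of.items() if h == name]:
            self.delete(child)
        del self.host_of[name]


# Class inheritance: the derived class sees the base class properties too.
entity = ClassDef("Demo.Entity", ("DisplayName",))
disk = ClassDef("Demo.Disk", ("DeviceID", "SizeGB"), base=entity)
disk_properties = disk.all_properties()

# Instance lifecycle: deleting the computer also deletes its hosted disks.
store = ToyStore()
store.add("SRV01")
store.add("SRV01/C:", host="SRV01")
store.add("SRV01/D:", host="SRV01")
store.add("SRV02")
store.delete("SRV01")
remaining = sorted(store.host_of)
print(disk_properties, remaining)
```

After `store.delete("SRV01")` only `SRV02` is left in the store, which mirrors the computer-and-disks example: the hosted disk instances disappear together with their host.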

To manipulate objects in the DB, you can either use the SCOM Connector Framework to insert, update, and delete class instances programmatically, or use a special workflow type called “Discovery”.

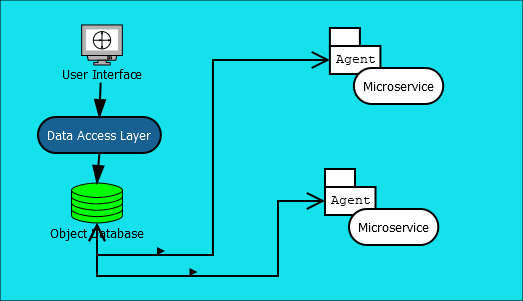

Taking another step deeper, I add another statement to the above declaration: “SCOM is an object-oriented database, coupled with a distributed micro-service platform featuring zero-touch node configuration.” To support my statement, consider the following (assuming the Health Service, aka the SCOM Agent, is already installed):

- All scripts, libraries, executables, and resources are delivered to each node automatically (as a part of management pack synchronization).

- Workflows can be manually, dynamically, or programmatically set to run on any node (i.e. SCOM Agent): workflow modules, such as data sources, probe actions, and write actions, are the building blocks of rules and monitors. Rules and monitors target a particular class and are executed by all agents that manage instances of the target class.

- Workflows can be configured from the central database: monitors and rules have configuration, class instances have properties, and configuration can be overridden. Together, these parameters produce an individual workflow configuration for each node.

- Workflows can send data to and receive data from other workflows using standard or custom data item structures.

- Data chunks can be uploaded or downloaded via Agent Tasks (upload) and the “System.FileUploadWriteAction” write action (download).
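The “individual configuration for each node” idea from the list above can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not how the Health Service actually resolves configuration: the function `effective_config`, the parameter names, and the `{DeviceID}` placeholder syntax are made up for illustration.

```python
def effective_config(defaults, overrides, instance_properties):
    """Merge workflow defaults with overrides, then substitute instance properties.

    Hypothetical model: overrides win over defaults, and string values may
    reference properties of the targeted class instance via {PropertyName}.
    """
    merged = {**defaults, **overrides}
    return {
        key: value.format(**instance_properties) if isinstance(value, str) else value
        for key, value in merged.items()
    }


# Defaults defined by the monitor, one override, and one disk instance.
monitor_defaults = {"IntervalSeconds": 300, "ThresholdPercent": 10, "Drive": "{DeviceID}"}
overrides = {"ThresholdPercent": 5}
disk_instance = {"DeviceID": "C:"}

config = effective_config(monitor_defaults, overrides, disk_instance)
print(config)
```

Here the same monitor definition yields a different concrete configuration for every disk instance, and the override changes only the threshold while leaving the other defaults intact.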

And the final step is to be made in a slightly different direction: “SCOM is an object-oriented database, which controls a distributed micro-service platform, coupled with a pluggable centralized UI control platform.” Here I refer to the SCOM Console application. If you use SCOM, you may have noticed that adding and removing management packs changes the look of the SCOM Console. This applies not just to the “Monitoring” section, where standard views are added or removed, but also to the “Authoring” and “Administration” sections. Say, if you remove all Unix/Linux MPs, then the Discovery Wizard will drop the “Unix/Linux” computer button, leaving only Windows Computer and Network Device. In fact, almost everything in the SCOM Console application is a plugin from a management pack. So, the final definition will be:

System Center Operations Manager is a distributed and scalable micro-service platform controlled by an object-oriented database. The platform features a central management UI extendable with custom forms, dashboards, and other reusable control elements.

Of course, the platform was invented with monitoring as its primary function, so this fact imposes some limitations. For instance, the data flow graph is a star, not a full mesh (i.e. data is uploaded and downloaded between central management servers and agents, not between arbitrary agents). However, the platform’s creators went much further than just a monitoring platform.

Now the question is: “What can I do on the SCOM platform using this new understanding of its capabilities?”

Well, almost anything. Any data collection service is a perfect example. Consider a large number of different data source appliances distributed across the globe in private networks. These appliances expose an API for pulling data, but they cannot be published to the Internet, so you need a secure way to deliver data from the appliances and also an easy way to manage appliance network configuration. To solve this problem you can:

1. Install Internet-facing gateway(s) in the central SCOM deployment.

2. Install data collection SCOM agents/gateways next to each appliance.

3. Connect the data collection agents/gateways (#2) to your SCOM management group via the public gateway (#1). Use strong TLS 1.2 encryption and certificate-based mutual authentication to secure the connection.

4. Create a custom management pack containing:

   - A custom UI form to register source appliances and bind each appliance object to its managing agent or gateway. The appliance objects created this way will contain all the configuration required to connect to the appliance, such as names or IP addresses.

   - A configuration that leverages the SCOM credential handling mechanism to securely store and deliver appliance credentials (if required).

   - A data collection and upload probe action, and a rule targeting appliance objects. This rule will pull data and ship it to the central SCOM management group.

   - A receiving rule. This rule runs on the management servers and receives the collected data. Optionally, the same rule can insert the received data into a DB or perform any data processing.
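The data flow described in the steps above can be modeled roughly as follows. This is a plain Python sketch of the idea only: the appliance records, the agent names, and the functions `plan_collection`, `collect`, and `receive` are hypothetical stand-ins for the registration form, the collection rule, and the receiving rule, and no SCOM API is involved.

```python
from collections import defaultdict

# Hypothetical appliance objects, as the registration form might create them.
appliances = [
    {"name": "sensor-a", "address": "10.0.1.10", "managed_by": "agent-berlin"},
    {"name": "sensor-b", "address": "10.0.1.11", "managed_by": "agent-berlin"},
    {"name": "sensor-c", "address": "10.8.0.5", "managed_by": "agent-tokyo"},
]


def plan_collection(appliances):
    """Group appliances by managing agent: a rule targeting the appliance
    class runs on whichever agent manages each appliance instance."""
    plan = defaultdict(list)
    for appliance in appliances:
        plan[appliance["managed_by"]].append(appliance["name"])
    return dict(plan)


def collect(agent, appliance_names, pull):
    """Stand-in for the collection rule: pull data and tag it with its origin."""
    return [
        {"agent": agent, "appliance": name, "payload": pull(name)}
        for name in appliance_names
    ]


def receive(batches):
    """Stand-in for the receiving rule: flatten batches arriving from all agents."""
    return [record for batch in batches for record in batch]


def fake_pull(name):
    # Placeholder for a real call to the appliance API.
    return f"data from {name}"


plan = plan_collection(appliances)
received = receive(collect(agent, names, fake_pull) for agent, names in plan.items())
print(plan)
print(len(received), "records received")
```

Note that the agents never talk to each other in this model: every record flows from an agent to the central receiver, which matches the star-shaped data flow of the platform.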

That’s it! Congratulations, your data collection infrastructure is ready to be deployed around the world and start delivering data into your HQ.

For a more complex example, I could say that I could rewrite… System Center Configuration Manager (SCCM)! I won’t go into much detail, but at least implementing its basic function, application deployment, is very easy:

- Create rules and UI to configure some agents as distribution points by installing and auto-configuring the IIS role. These rules target a computer-hosted Distribution Point (DP) class.

- Make a set of rules and UI to distribute content between DPs.

- Each hosted application is an object inserted via the Connector Framework.

- Application deployment to a target computer can be a programmatically created monitor targeting all computers, but enabled only where deployment is enabled. The monitor configuration holds all the information on where to download the application from and how to install it.

- The monitor state will be the compliance indicator for this application.
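As a rough illustration of the last two points, the “monitor as compliance indicator” logic could look like the Python sketch below. The function `compliance_state`, the state names, and the sample computers are assumptions made for this example; a real monitor would be defined in a management pack rather than in Python.

```python
def compliance_state(deployment_enabled, installed_version, required_version):
    """Hypothetical monitor logic: the state doubles as a compliance indicator."""
    if not deployment_enabled:
        # The monitor targets all computers but is enabled only where needed.
        return "NotMonitored"
    if installed_version == required_version:
        return "Healthy"
    return "Critical"


# Sample inventory: installed version of the application per computer.
computers = {
    "PC-01": {"enabled": True, "installed": "2.1"},
    "PC-02": {"enabled": True, "installed": None},
    "PC-03": {"enabled": False, "installed": None},
}

states = {
    name: compliance_state(c["enabled"], c["installed"], "2.1")
    for name, c in computers.items()
}
print(states)
```

In this model `PC-02` ends up in a critical state until the application is installed, while `PC-03` is simply not evaluated because deployment is not enabled for it.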

